PilotStudy-Group:Orquesta-AnthonyKilman

From CS 160 Fall 2008


Write-Up

Introduction

System being evaluated

Introduce the system being evaluated

The system evaluated in this Pilot Usability study is Orquesta: a serious game that offers an entertaining way to learn beat mixing, an essential skill for any DJ. For the aspiring DJ, professional music software can be overwhelming: the interface can be exceedingly complex, and the software itself tends to be expensive. Orquesta provides a simple, fun interface that lets its users learn how to mix beats without a convoluted interface or a hefty price tag. Orquesta's scoring mechanism encourages the user to mix tracks into a composition “on-beat”, giving the user an additional incentive to test their beat mixing “skillz.” This scoring mechanism, together with its emulation of tasks performed in traditional DJ software, makes Orquesta an entertaining and cost-effective way to teach beat mixing.

Purpose

State the purpose and rationale of the experiment

To gauge our team's progress with the current iteration of Orquesta, each of us will individually conduct a Pilot Usability study with new participants to determine what modifications are necessary for the next iteration. Because the participants are new to the interface, the evaluations will benefit from a first-time user's fresh perspective, which may uncover usability issues we have missed since the last iteration. To achieve this goal, we will ask each participant to complete three primary tasks and will log the participant's reactions as events while they complete those tasks. This data will then be analyzed further to uncover usability issues to address in future iterations.

Implementation and Improvements

Describe all the functionality you have implemented and/or improved since submitting your interactive prototype

Most of the changes we have made to the Orquesta interface were based on feedback from the Heuristic Evaluations and the Interactive Prototype presentation. Accordingly, our improvements since then fall into four categories: (1) user control and freedom, (2) flexibility and efficiency, (3) visibility and system status, and (4) consistency and standards.

(1) The User Control and Freedom category consisted mostly of anticipated updates, a consequence of the last assignment being a prototype. One bug our group was already aware of was that selecting two of the same instrument on the Practice Mode instructions screen brought the user back to the Campaign Mode instructions screen. We promptly corrected this so that the screen now behaves as intended. Following our evaluators' comments, we also enabled the user to change a track's volume by clicking the desired location on the volume slider. Finally, as another anticipated update, we added a Level Select Screen that lets the user retry any level they have already completed.

(2) The Flexibility and Efficiency category included only a single update to the interface: the Level Select Screen, which also addressed issues mentioned in the previous category. In this category, our evaluators found it unclear how a player would return to a level after completing it. Our original rationale for omitting this option was that most users don't really want to replay levels; their primary objective is to finish the game. But because our application is a serious game, letting users replay levels, whether to practice or to obtain a higher score, is a necessary component. As a group, we were reminded that the objective is not just to create a game, but to create a game that effectively teaches beat mixing.

(3) The Visibility and System Status category required the most modifications, particularly removing the save/import functionality and improving general user notifications for system status. Because the last assignment was a preliminary prototype and parts of the interface genuinely needed improvement, most of our group's attention was focused here. Several issues had been reported with saving and importing compositions in the last iteration, and in the interest of time we chose to discard both features entirely. Our rationale was that we are first and foremost trying to teach beat mixing to the novice user, and perhaps to reinforce its concepts for the intermediate user. What we needed to avoid was emulating another DJ application wholesale, and saving/importing ran counter to our primary objective. Regarding system status and its visibility to the user, we made several small but necessary updates: displaying which mode the user is currently in, making it clearer which objectives are required to progress to the next level, and adding an indicator that helps the user determine precisely when to switch a track on or off. We added this transition assist feature because we felt that, for a beginner, it might not be intuitive exactly when to switch a track on in Campaign Mode. Because the track titles are highlighted during a valid transition interval, it is plainly obvious when the user can successfully switch a track on.
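The transition assist can be thought of as a timing window around regularly spaced boundaries in the music. The Python sketch below is a hypothetical illustration, not Orquesta's actual Flash implementation: it assumes a constant tempo (`bpm`), that valid transitions fall on every fourth beat (`beats_per_phrase`), and an arbitrary `tolerance` of 0.15 seconds; the function name `in_transition_window` is made up for this example.

```python
def in_transition_window(t: float, bpm: float, beats_per_phrase: int = 4,
                         tolerance: float = 0.15) -> bool:
    """Return True if time t (seconds since the track started) lies within
    `tolerance` seconds of a phrase boundary.

    Hypothetical model: constant tempo, boundaries every `beats_per_phrase` beats.
    """
    phrase_len = beats_per_phrase * 60.0 / bpm  # seconds per phrase
    offset = t % phrase_len                     # time since the last boundary
    # Accept switches slightly after the last boundary or slightly before the next.
    return offset <= tolerance or phrase_len - offset <= tolerance


# At 120 BPM a four-beat phrase lasts 2.0 s, so boundaries fall at 0, 2, 4, ... s.
print(in_transition_window(4.05, bpm=120))  # just after a boundary
print(in_transition_window(5.0, bpm=120))   # mid-phrase
```

Under these assumptions, the interface would highlight the track titles while the function returns `True`, and a switch made while it returns `False` would be the kind of off-beat transition that earns a strike.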

(4) The Consistency and Standards category included refining the Quit button's functionality, making all buttons in the game consistent, and preventing the user from proceeding to the next Campaign level without passing the previous one. We removed the Quit button from the main screen because we concluded that most people would use the application embedded in a web page rather than as a standalone application. We also noted on the preliminary instruction screens for Practice and Campaign Mode that clicking the Stop button on the following screen is equivalent to quitting the level currently being played.

Method

Participant

Participant (who -- demographics -- and how were they selected)

The participant in this usability study is a relatively experienced DJ, chosen in hopes of showing that our application can reinforce beat mixing principles for the intermediate DJ. Because other potential candidates were unavailable, this participant had already taken part in the Low Fidelity study, where she was referred to as Jane. In terms of demographics, she is a student and a fairly experienced DJ who is active in the field every week or so. For my Pilot Usability study, I aimed for a more experienced candidate because my teammates were likely to test subjects with less beat mixing experience. This should give our results a bit of balance when we sit down to decide on the changes for the next iteration.

Apparatus

Apparatus (describe the equipment you used and where)

The Pilot Usability study will be conducted on my laptop, a Lenovo ThinkPad T60, with Jane running Orquesta via Flash in a virtualized Windows XP environment. Although the environment is virtualized, the test computer is relatively fast and has shown no difficulties during design and testing thus far. To allow more freedom of movement, an external mouse will be used instead of the laptop's trackpad. The session will also be timed, and critical events will be logged manually with pen and paper. The study will be conducted in the graduate lounge of Cory Hall because it is a relatively quiet environment, and using Orquesta requires the user to pay attention to perceptible variations in the beat. This setting also made it easier to observe Jane's reactions in person and to record critical events.

Tasks

Tasks [you should have this already from previous assignments, but you may wish to revise it] describe each task and what you looked for when those tasks were performed

Complete Tutorial Mode

Again, our tasks reflect the logical progression a user should follow when experimenting with the Orquesta interface. First, a user who is unfamiliar with the interface must become acquainted with each functional component of Orquesta, which they do by completing Tutorial Mode.

This is likely the most important component of the interface because it teaches a new user how to use Orquesta, so any confusion or critical events that occur here are extremely important and reflect heavily on our design. After the user completes this task, we will most likely talk further about the effectiveness of Tutorial Mode, either to confirm that everything is crystal clear or to assess what should or should not change.

Attain power-up in Campaign Mode

After going through Tutorial Mode, the user will want to “test [their] skillz” in Campaign Mode. On the way to completing the third task, the user should be able to attain a “power-up”, which is awarded for successfully making more than two consecutive transitions. The power-up not only tells the user that they are making transitions successfully (while scoring double points), but is also familiar, since it borrows from Guitar Hero's power-up mode.

Here we are looking to see whether the user can successfully use the primary interface. If a user can obtain a power-up, they have caught on to the principles Orquesta is trying to teach. Making even one successful transition requires both watching the transition assistant as a visual cue and listening closely to the beat, let alone making more than two in succession. A key measure in this task is the interval between successful transitions. The task itself may take several attempts, especially for a user new to the interface, so this interval should serve as a measure of the user's progress.
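The streak logic behind the power-up can be sketched as a small state machine. This is a hypothetical Python model, not Orquesta's code: `BASE_POINTS`, the `ScoreState` class, the rule that a strike resets the streak, and the rule that doubling applies to transitions made once the power-up is active are all assumptions; only the “more than two consecutive transitions” threshold and the double points come from the task description.

```python
from dataclasses import dataclass

BASE_POINTS = 100    # hypothetical points for one on-beat transition
POWER_UP_STREAK = 3  # "more than two consecutive transitions"


@dataclass
class ScoreState:
    score: int = 0
    streak: int = 0   # consecutive on-beat transitions
    strikes: int = 0  # off-beat transition attempts

    @property
    def powered_up(self) -> bool:
        return self.streak >= POWER_UP_STREAK

    def record_transition(self, on_beat: bool) -> None:
        if not on_beat:
            self.strikes += 1
            self.streak = 0  # assumption: a strike breaks the streak
            return
        # Assumption: double points apply once the power-up is already active.
        multiplier = 2 if self.powered_up else 1
        self.streak += 1
        self.score += BASE_POINTS * multiplier


state = ScoreState()
for _ in range(4):
    state.record_transition(on_beat=True)
print(state.score, state.powered_up)
```

Under this model, three on-beat transitions earn 300 points and activate the power-up, the fourth earns 200, and a single strike ends the streak.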

Beat Level 1

Once the user has completed Tutorial Mode and obtained at least one power-up, they are sufficiently prepared to complete the first level of Campaign Mode. Completing it demonstrates a thorough understanding of, and familiarity with, the interface (some of the designers initially had difficulty completing the first level as well).

This task builds on the previous one: not only must the user make transitions in succession to obtain a power-up, but they must also recognize that they need to complete enough transitions to meet the objectives for that level. This means staying below the maximum number of strikes and completing the objectives before the timer runs out. Here we will focus on the user's understanding of the strike notifications and the timer interface. Any confusion in this area is a red flag regarding user notifications.
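The pass condition for a Campaign level, as described above, combines three checks. The sketch below is hypothetical: the function name and parameters are illustrative, and the actual per-level thresholds in Orquesta are not given in this write-up.

```python
def unmet_objectives(transitions: int, strikes: int, elapsed: float,
                     required_transitions: int, max_strikes: int,
                     time_limit: float) -> list[str]:
    """Return the reasons a level is not (yet) passed; an empty list means passed.

    Hypothetical model of the objectives: enough transitions, fewer strikes
    than the limit, and completion before the timer runs out.
    """
    reasons = []
    if transitions < required_transitions:
        reasons.append(f"{required_transitions - transitions} more transitions needed")
    if strikes >= max_strikes:  # the user must stay *below* the strike limit
        reasons.append("too many strikes")
    if elapsed > time_limit:
        reasons.append("time limit exceeded")
    return reasons
```

Returning the reasons rather than a bare boolean is one way to feed the strike and timer notifications that this task is meant to evaluate.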

Procedure

Procedure describe what you did and how

First and foremost, the ground rules of the study were made very clear to the participant: Jane would complete three different tasks, and she would not receive any help even if she ran into difficulties. I also explained to Jane that the study was meant to identify critical issues in the interface, and that her reactions would be analyzed to improve the next iteration.

Complete Tutorial Mode

First, I described the task of completing Tutorial Mode to Jane without explaining exactly how to achieve it. Because completing Tutorial Mode is likely the most important task for beginners, the reactions recorded in the event log for this task will be analyzed in the greatest detail.

Upon completing Tutorial Mode, the user should be familiar with the following basics of Orquesta: how to switch tracks on and off with the track assist, scoring basics, off-beat transitions resulting in “strikes”, the time limit for each level, the objective for each level, individual track volume adjustment, and the restart button.

I then asked Jane to briefly describe each function of the Orquesta interface as it was presented in the tutorial. If she had difficulty describing any of the basics above, she would be asked to complete the tutorial again, to help isolate the parts of the tutorial that were unclear.

Attain power-up in Campaign Mode

As with the previous task, I explained the general goal of this task to Jane: to obtain a power-up, she must make at least three consecutive transitions. I also made it clear that this task would likely take several attempts. This task provides the data for analyzing the interval between successful transitions, and that analysis should show her progress in learning how to make transitions successfully in Orquesta.

Upon completing this task, the user will have learned how to select a level to play (if levels have previously been completed), select tracks for the first level, and make more than two consecutive transitions. At the end of this task, we will discuss the effectiveness of the track assist and whether it actually helped her performance.

Beat Level 1

As before, I explained the high-level goal of the task to Jane. Completing this third and final task demonstrates full knowledge of the interface: understanding how to switch on a track properly, how doing so avoids strikes, and how to complete the objectives explained at the beginning of the level. Beyond this framework, Jane was given no other information.

By this task, the user should be mostly acquainted with the interface; the task is really a demonstration of full knowledge of the Orquesta interface as it stands. This task may also be combined with the previous one if the user makes more than two consecutive transitions and goes on to finish the level in the same run. The same measurements as in the previous task will be taken.

Test Measures

Describe what you measured and why

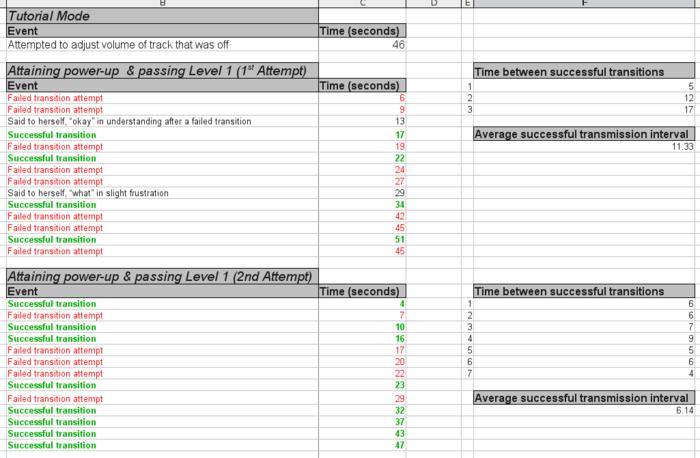

For each task, the user's interactions with the application were recorded. By examining the event log and the critical events in detail, we can gain insight into exactly what the user was thinking and how this affected their completion of the tasks. For Tutorial Mode, there are no quantitative measurements beyond the event log itself. For the last two tasks, the number of attempts needed to attain a power-up and to complete a level will be logged. Additionally, the interval between successful transitions will be plotted as a function of the transition number, to illustrate the learning curve of the Orquesta interface.
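The interval measure can be computed from the logged times of successful transitions and summarized with a straight-line trend, one line per trial. The sketch below assumes the log yields a list of timestamps in seconds for each trial; the function names are illustrative, and the timestamps in the example are invented, not data from this study.

```python
def transition_intervals(timestamps: list[float]) -> list[float]:
    """Seconds between consecutive successful transitions."""
    return [later - earlier for earlier, later in zip(timestamps, timestamps[1:])]


def linear_trend(intervals: list[float]) -> tuple[float, float]:
    """Least-squares fit of interval = slope * n + intercept, where n = 1, 2, ...
    is the interval's position in the sequence."""
    n = len(intervals)
    if n < 2:
        raise ValueError("need at least two intervals to fit a trend line")
    xs = range(1, n + 1)
    x_mean = sum(xs) / n
    y_mean = sum(intervals) / n
    sxx = sum((x - x_mean) ** 2 for x in xs)
    sxy = sum((x - x_mean) * (y - y_mean) for x, y in zip(xs, intervals))
    slope = sxy / sxx
    return slope, y_mean - slope * x_mean


# Hypothetical timestamps (seconds into one trial), not data from this study.
trial = [0.0, 20.0, 36.0, 48.0, 56.0]
gaps = transition_intervals(trial)     # [20.0, 16.0, 12.0, 8.0]
slope, intercept = linear_trend(gaps)  # -4.0, 24.0
```

A negative slope means the intervals are shrinking, which is the learning effect the trend lines are meant to show. A linear fit is used here only because it matches the per-trial trend lines; learning-curve data is also commonly modeled with a power law.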

Results

Results of the tests

The results of the Pilot Usability study can be broken down by the three tasks presented to the user: results for (1) Tutorial Mode, (2) achieving a power-up, and (3) completing Level 1. Overall, the results indicate that the fixes we are likely to make are, for the most part, cosmetic. At the same time, the results may be somewhat skewed because Jane is anything but a beginner when it comes to beat mixing. With this in mind, the results of the three tasks are discussed further below.

As anticipated, Tutorial Mode was a relatively straightforward task. Even though it was not difficult, the results of this task weigh heavily on any decision to make further modifications in the next iteration. One item the event data highlighted is that the interface should make it clearer when a track is actually on or off. The event that indicated this was Jane attempting to adjust the volume bar on a track that was not on. After the session, I asked her why she did this, and she told me she wasn't sure whether the track was playing. This clearly indicates that we need better visual notification that a track is playing, and perhaps should grey out the volume controls of tracks that are off to emphasize it.

Achieving a power-up and completing Level 1 can be discussed together, since both can be completed in the same trial. Both tasks took Jane two separate trials, and the data obtained reflected the expected learning curve for the interface. Jane also learned to transition efficiently between tracks relatively quickly, as she is a fairly experienced DJ: I had anticipated several trials before she completed the last two tasks, and she managed it in two. After the tasks, we discussed the interface further. Jane was under the impression that she was also required to switch tracks off on beat. We have not made that a requirement, but it will definitely be a consideration once our group aggregates its data to determine what work needs to be done.

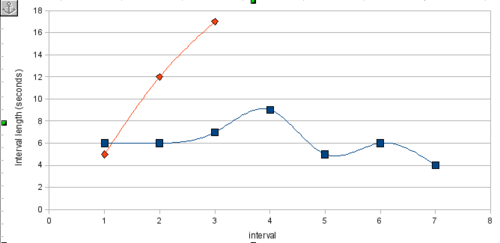

The graph in Figure 1 illustrates how quickly Jane was able to learn the interface. Although I would have preferred more data points, she learned very quickly, and we could gather only two trials before she completed the last two tasks. The small number of trials suggests that more advanced users, or those more versed in beat mixing, are likely to grasp the concepts Orquesta is trying to teach much more quickly. The red and blue trend lines show the interval length between successful transitions for the first and second trials, respectively. Note that the first trial has fewer data points because too many strikes caused the user to fail on the first attempt.

Discussion

What you learned from the pilot run (1 page) what you might change for the "real" experiment? what you might change in your interface from these results alone? If you'd like, you may include results and assumptions from other group members' tests here as well.

The Pilot Usability study provided valuable insight into what needs to be updated for the next iteration. From the data gleaned from this study, we can assert that: (1) Orquesta needs to make its status updates more visible to the user, (2) Tutorial Mode needs to explain the interface further, and (3) we should consider requiring the user to switch an instrument off “on-beat.” These assessments are based primarily on the data from this study, so as a group we will not necessarily make all of these updates in the next iteration.

Orquesta should make its system status more visible to the user; specifically, a more visible indicator should show when a track is on or off, and strikes should be more visible to the user. In the tutorial, Jane attempted to adjust the volume of a track that was off. Although this could be dismissed as part of being new to the Orquesta interface, we would like to take any guessing or recall out of the equation. With this in mind, the controls of a track that is on could be highlighted with a blue glow, and greyed out once the track is off. Strikes, that is, transitions attempted “off-beat”, should also be more clearly discouraged. Although the breaking record is visible, Jane commented that it should be more obvious to a new user that they have done something they should not have. One suggestion is to tint the track control red for a short time to indicate the failed transition.

Tutorial Mode should be more explicit when explaining the interface. In particular, it should explain every component of the interface so that no questions remain about functionality. The easiest way to achieve this is with additional screens that explain components of the interface in more detail, such as the track assist feature, when tracks are actually on or off, and exactly what the user can do with a track that is on or off.

Finally, we should consider requiring the user to switch tracks off “on-beat” as well. This was Jane's suggestion, as she was under that impression even after the tutorial (another indication that we need to elaborate in Tutorial Mode). For an experienced DJ this is intuitive, since a transition in its basic form is either switching a track on or switching a track off. However, depending on the results from other group members, this may only make the last two tasks harder for less experienced test subjects.

Appendices

Materials (all things you read --- demo script, instructions -- or handed to the participant -- task instructions)

Instructions were verbally given to the participant, and these are documented in the Procedure section above.

Raw data

Figure 2 contains the incident data transcribed during the usability study. In general, I hoped to use the interval length in seconds between successful transitions as a benchmark of how well the user was learning the interface. The data confirmed my initial expectation, consistent with what we saw in the Model Human Processor paper, that repeated tasks take significantly less time to accomplish.

Figure 3 shows a consent form borrowed from a previous semester.